Throughput is the number of work items completed per unit of time. It is one of the three variables in Little’s Law, a model that can be used to understand how to manage flow through a system. Examples of throughput:

- Number of product backlog items completed per sprint

- Number of emails sent per day

- Number of projects completed last year

In Little’s Law, throughput is related to cycle time and work-in-process (WIP):

Throughput = WIP / Cycle Time

If cycle time decreases and WIP is constant, then throughput increases.

If WIP increases and cycle time remains constant, then throughput increases. Conversely, reducing WIP while throughput holds steady shortens cycle time.
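The arithmetic of the formula can be checked with a short sketch. The team sizes and day counts below are hypothetical, and the function names are illustrative only. Little’s Law describes long-run averages in a reasonably stable system, so each scenario holds one variable fixed by assumption; in practice, adding WIP usually lengthens cycle time as well.

```python
def throughput(wip: float, cycle_time: float) -> float:
    """Little's Law solved for throughput: average WIP / average cycle time."""
    if cycle_time <= 0:
        raise ValueError("cycle_time must be positive")
    return wip / cycle_time


def cycle_time_from(wip: float, throughput_rate: float) -> float:
    """Little's Law solved for cycle time: average WIP / average throughput."""
    if throughput_rate <= 0:
        raise ValueError("throughput_rate must be positive")
    return wip / throughput_rate


# Hypothetical team: 10 items in process, 5-day average cycle time.
baseline = throughput(wip=10, cycle_time=5)           # 2.0 items/day
shorter_cycle = throughput(wip=10, cycle_time=4)      # 2.5 items/day (same WIP)
more_wip = throughput(wip=12, cycle_time=5)           # 2.4 items/day (same cycle time)
less_wip = cycle_time_from(wip=6, throughput_rate=2)  # 3.0 days (same throughput)
```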

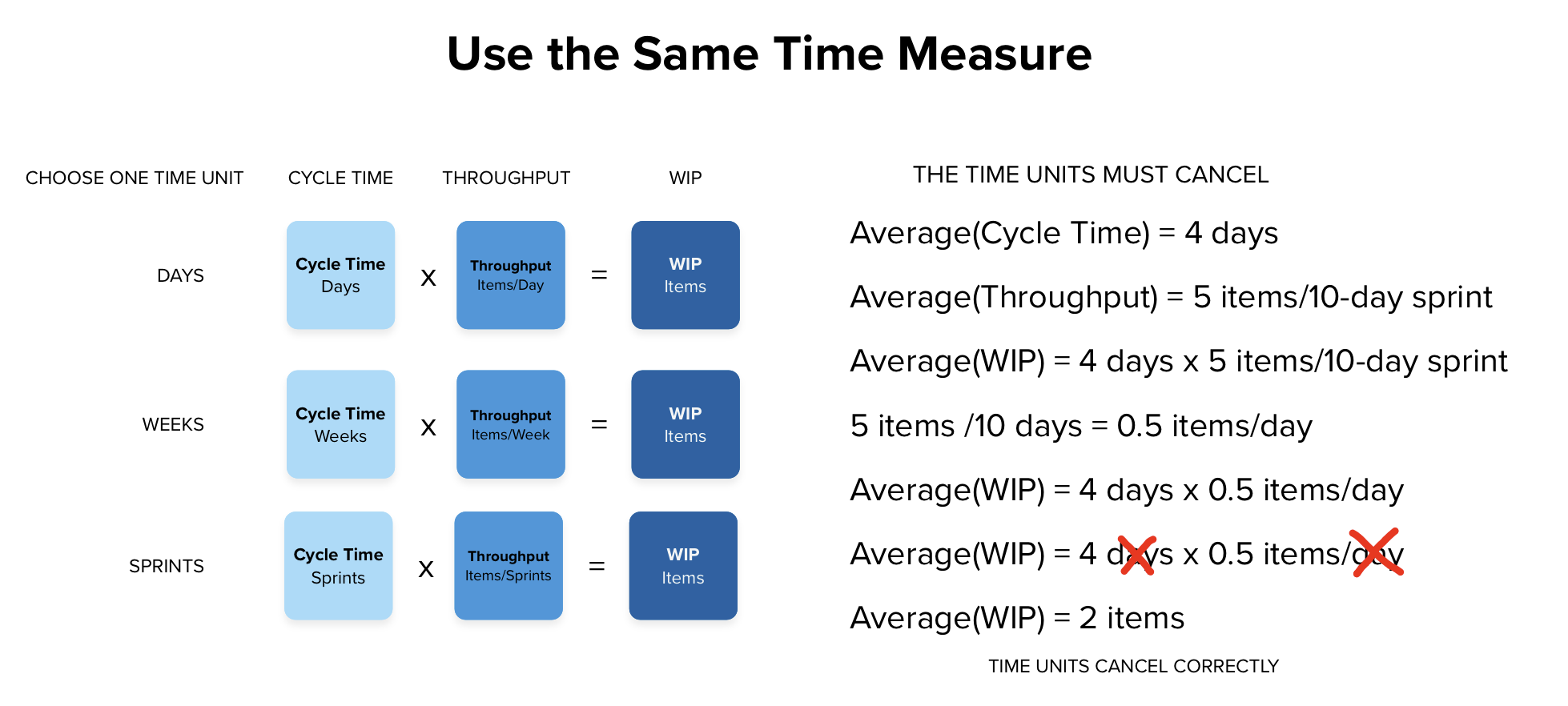

When measuring throughput, use the same time unit in which cycle time is measured (for example, items per day and days). That way, the time units cancel when one variable is calculated from the other two.
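A minimal sketch of measuring both quantities in days from item records, assuming each item has a start date and a finish date and that cycle time is counted as elapsed calendar days (teams define this differently). The records and the 10-day window are hypothetical. Because throughput is in items per day and cycle time is in days, their product is in items, an estimate of average WIP.

```python
from datetime import date

# Hypothetical work items: (start date, finish date).
items = [
    (date(2024, 3, 1), date(2024, 3, 4)),
    (date(2024, 3, 2), date(2024, 3, 6)),
    (date(2024, 3, 3), date(2024, 3, 5)),
    (date(2024, 3, 4), date(2024, 3, 10)),
]


def cycle_times_in_days(records):
    """Elapsed calendar days from start to finish for each item."""
    return [(finish - start).days for start, finish in records]


def throughput_per_day(records, window_start, window_end):
    """Items finished within [window_start, window_end], per calendar day."""
    window_days = (window_end - window_start).days + 1
    finished = sum(1 for _, finish in records if window_start <= finish <= window_end)
    return finished / window_days


cts = cycle_times_in_days(items)      # [3, 4, 2, 6]
avg_cycle_time = sum(cts) / len(cts)  # 3.75 days
tp = throughput_per_day(items, date(2024, 3, 1), date(2024, 3, 10))  # 0.4 items/day
# Days cancel: items/day * days = items, an estimate of average WIP.
estimated_wip = tp * avg_cycle_time   # about 1.5 items
```

Here the estimate matches a direct count: 15 item-days in process spread over a 10-day window is 1.5 items on average.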

Reducing cycle time is central to managing flow.

Cycle Time = Service Time + Waiting Time

Longer cycle times mean that work items stay in the system longer. The longer an item remains work-in-process (WIP), the more vulnerable it is to changes in the environment or to reprioritization decisions. These disruptions to the ability to finish work are called variability, and their impact grows as cycle time increases.
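The decomposition can be sketched as a small data type. The item names and day counts are hypothetical, and `flow_efficiency` (the share of cycle time spent in active service) is a common companion metric added here for illustration, not something defined in this article.

```python
from dataclasses import dataclass


@dataclass
class WorkItem:
    name: str
    service_days: float  # time someone is actively working on the item
    waiting_days: float  # time the item sits in a queue or is blocked

    @property
    def cycle_time(self) -> float:
        # Cycle Time = Service Time + Waiting Time
        return self.service_days + self.waiting_days

    @property
    def flow_efficiency(self) -> float:
        # Share of cycle time spent in active service.
        return self.service_days / self.cycle_time


item = WorkItem("checkout-refactor", service_days=2, waiting_days=6)
print(item.cycle_time)       # 8
print(item.flow_efficiency)  # 0.25

# Halving waiting time (6 -> 3) shortens cycle time more than halving service time (2 -> 1).
print(WorkItem("a", 2, 3).cycle_time, WorkItem("b", 1, 6).cycle_time)  # 5 7
```

When most of an item's life is spent waiting, as in this example, shrinking queues does more for cycle time than speeding up the work itself.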

The best way to control cycle time is to control WIP. The maximum throughput of any production line is limited to the maximum throughput of its bottleneck (the system constraint). WIP is easier and less expensive to control than bottlenecks.
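For a serial line, the bottleneck rule reduces to taking the minimum step capacity. A minimal sketch, assuming each step's capacity is known in items per day; the capacities are hypothetical, and ANALYZE and DEPLOY are illustrative step names alongside the CODE, TEST, and INTEGRATION steps used later in this article.

```python
def bottleneck(step_capacity: dict[str, float]) -> str:
    """The step with the lowest capacity (items per day)."""
    return min(step_capacity, key=step_capacity.get)


def max_system_throughput(step_capacity: dict[str, float]) -> float:
    """A serial line can deliver no faster than its slowest step."""
    return min(step_capacity.values())


# Hypothetical capacities in items per day, listed left to right.
capacity = {"ANALYZE": 6, "CODE": 5, "TEST": 3, "INTEGRATION": 4, "DEPLOY": 8}
print(bottleneck(capacity))             # TEST
print(max_system_throughput(capacity))  # 3
```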

Improving Throughput

By addressing the bottleneck, throughput can be increased while WIP remains constant. This will have the added effect of lowering cycle time.
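Combining the bottleneck rule with Little’s Law shows both effects at once. This sketch assumes the line is saturated, so it runs at its bottleneck rate, and holds WIP fixed at a hypothetical 12 items; the capacities are also hypothetical.

```python
def flow_metrics(step_capacity: dict[str, float], wip: float) -> tuple[float, float]:
    """Assumes the line runs at its bottleneck rate; returns (throughput, cycle time)."""
    throughput = min(step_capacity.values())
    cycle_time = wip / throughput  # Little's Law rearranged
    return throughput, cycle_time


WIP = 12  # held constant in both scenarios
before = flow_metrics({"CODE": 5, "TEST": 3, "INTEGRATION": 4}, WIP)
after = flow_metrics({"CODE": 5, "TEST": 4, "INTEGRATION": 4}, WIP)
print(before)  # (3, 4.0)
print(after)   # (4, 3.0)
```

Raising TEST from 3 to 4 items per day lifts throughput to 4 and, with WIP unchanged, cuts cycle time from 4 days to 3.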

As a result, throughput and cycle time are both highly sensitive to automation. When a step that relies on manual labor tends to become a bottleneck, that step is a candidate for automation. Automation tends to raise the capacity of the constrained step and let work flow rapidly through it.

The order in which bottlenecks are addressed matters. To avoid creating queues, start with the bottlenecks furthest downstream. Addressing upstream bottlenecks will have little effect on system performance if the primary constraint is downstream.

In Kanban, start on the right side of the board when looking for constraints to remove in order to increase throughput.
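One simple way to express the right-to-left search in code, assuming a board snapshot where each column has a card count and a WIP limit, and treating a column at or over its limit as a likely constraint. This is a hypothetical heuristic for illustration, not a standard Kanban algorithm, and the board values are made up.

```python
from typing import NamedTuple, Optional


class Column(NamedTuple):
    name: str
    cards: int      # cards currently in the column
    wip_limit: int  # the column's WIP limit


def first_constraint_from_right(board: list[Column]) -> Optional[str]:
    """Scan a left-to-right board from the right; return the first full column."""
    for column in reversed(board):
        if column.cards >= column.wip_limit:
            return column.name
    return None


# Hypothetical board snapshot. CODE and TEST are both full; TEST is further right.
board = [
    Column("ANALYZE", 2, 4),
    Column("CODE", 5, 5),
    Column("TEST", 6, 4),
    Column("INTEGRATION", 1, 3),
]
print(first_constraint_from_right(board))  # TEST
```

Scanning from the right surfaces TEST before CODE, matching the advice to address the downstream constraint first.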

Local Throughput Gains != System Gains

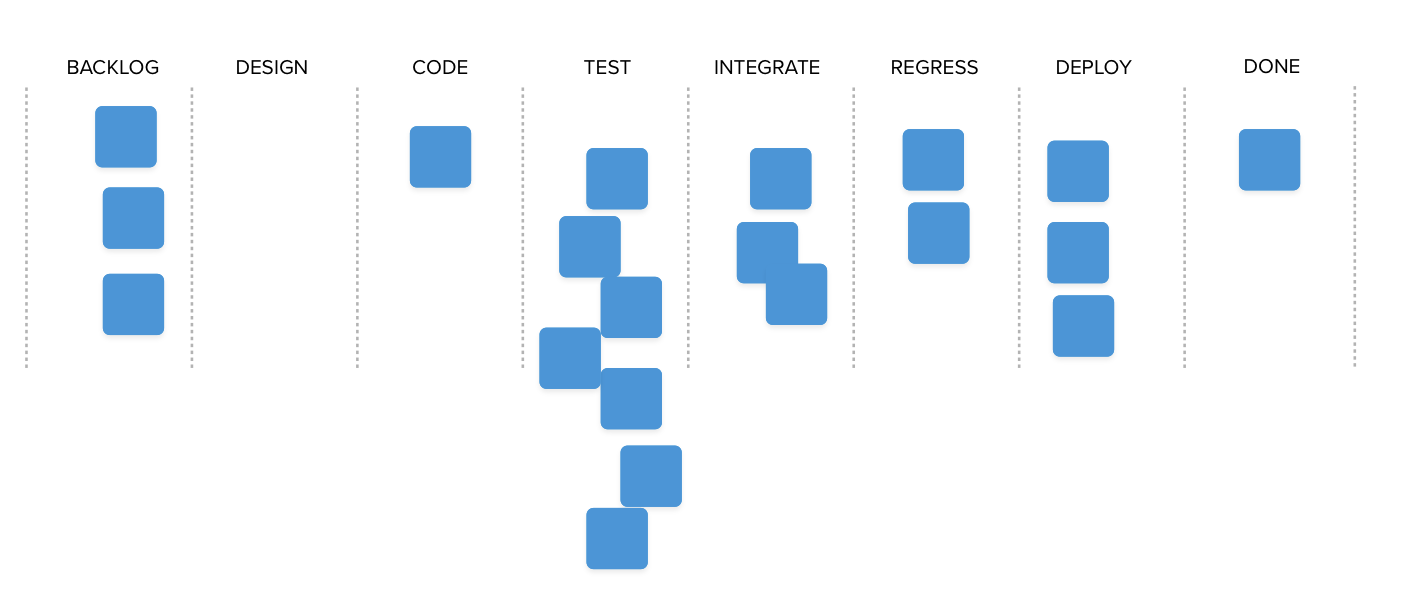

In the illustration above, throughput of the CODE step was greatly increased by adding agentic programming practices and automation. Relieving the bottleneck within CODE increased CODE throughput, but TEST and the other downstream steps still constrain the entire system.

CODE is producing more items per day. TEST is not. The system throughput has not improved.

A queue grows in front of TEST activities. How will it be addressed?

- Increase the capacity of TEST by hiring more people? This may address the TEST bottleneck, but will likely cause a queue to form at INTEGRATION. System throughput will remain the same.

- Reroute lower-value work around TEST to reduce the load on the bottleneck? This will probably have the same effect as the previous option.

- Violate WIP limits, keep sending work to TEST, and let the queue grow?
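A small day-by-day simulation makes the first option concrete. It assumes a serial line where CODE sends a fixed 8 items per day into TEST and then INTEGRATION, and that INTEGRATION can handle no more than TEST’s original 3 items per day; all rates are hypothetical. As a simplification, work finished by one step is available to the next step on the same day.

```python
def simulate_line(arrivals_per_day: int, capacities: list[int], days: int):
    """Simulate a serial line day by day.

    capacities lists each step's items/day, left to right. Returns
    (queue in front of each step, total items delivered).
    Simplification: work finished by one step is available to the next step the same day.
    """
    queues = [0] * len(capacities)
    delivered = 0
    for _ in range(days):
        queues[0] += arrivals_per_day
        for i, capacity in enumerate(capacities):
            done = min(queues[i], capacity)
            queues[i] -= done
            if i + 1 < len(capacities):
                queues[i + 1] += done
            else:
                delivered += done
    return queues, delivered


# CODE sends 8 items/day into [TEST, INTEGRATION] (hypothetical rates).
print(simulate_line(8, [3, 3], days=5))  # ([25, 0], 15): queue builds in front of TEST
print(simulate_line(8, [6, 3], days=5))  # ([10, 15], 15): more testers, queue moves to INTEGRATION
```

Doubling TEST capacity leaves delivery at 15 items over 5 days; the queue simply relocates to INTEGRATION.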

The best solution is to limit the throughput of every upstream work state to the maximum throughput of the system bottleneck.
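One way to impose that limit is a WIP limit on the queue in front of TEST, so CODE only adds work when there is room. The sketch below assumes hypothetical rates (CODE can finish 8 items per day, TEST can handle 3) and a hypothetical queue limit of 5; a very large limit stands in for "no limit".

```python
def simulate_pull(days: int, code_capacity: int, test_capacity: int, queue_limit: int):
    """CODE only adds work while the queue in front of TEST is below queue_limit.

    Returns (queue in front of TEST at the end, total items TEST completed).
    """
    queue = 0
    delivered = 0
    for _ in range(days):
        room = max(0, queue_limit - queue)
        queue += min(code_capacity, room)  # upstream is throttled by the WIP limit
        done = min(queue, test_capacity)
        queue -= done
        delivered += done
    return queue, delivered


print(simulate_pull(10, 8, 3, queue_limit=5))      # (2, 30)
print(simulate_pull(10, 8, 3, queue_limit=10**9))  # (50, 30): same output, much larger queue
```

After the first day, the limit throttles CODE to TEST’s rate of 3 items per day. Delivery is identical in both runs, but the limited run carries far less WIP, and by Little’s Law that means shorter cycle times.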

Notice also that the CODE work state is beginning to starve. It no longer has any queue, and upstream steps cannot produce enough work to keep it filled.

Results:

- Improving one step in the process without addressing the whole system will not increase delivery throughput overall.

- Improving one step in the process will likely cause slowdowns as WIP limits are violated in celebration of increased throughput at CODE.

Manage the Whole System

Managers of this system should start with the system constraint and raise system throughput by relieving that bottleneck first. As downstream bottlenecks are identified and addressed, the gains in CODE throughput will begin to show up as improved delivery throughput. It may prove valuable to work right to left through the process (assuming work flows from left to right).
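The iterative approach can be sketched as a loop that repeatedly adds capacity to whichever step is currently the constraint. The capacities, the fixed `boost` per round, and the DEPLOY step name are hypothetical assumptions; real improvements rarely arrive in equal increments.

```python
def relieve_bottlenecks(capacity: dict[str, int], boost: int, rounds: int) -> list[int]:
    """Repeatedly add `boost` capacity to the current constraint.

    Returns system throughput (the minimum step capacity) before and after each round.
    """
    capacity = dict(capacity)  # work on a copy
    history = [min(capacity.values())]
    for _ in range(rounds):
        constraint = min(capacity, key=capacity.get)
        capacity[constraint] += boost
        history.append(min(capacity.values()))
    return history


# Hypothetical items/day after AI has raised CODE capacity to 10.
line = {"CODE": 10, "TEST": 3, "INTEGRATION": 4, "DEPLOY": 6}
print(relieve_bottlenecks(line, boost=2, rounds=3))  # [3, 4, 5, 6]
```

Delivery throughput climbs only as each successive constraint is relieved; CODE’s capacity of 10 is not reflected in delivery until every other step can keep pace.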

Throughput gains are not unlimited, however, as long as humans must review machine-produced results. The AI may produce many alternatives to review and choose from, and humans have an upper limit on how many options they can evaluate, decide among, and integrate.

Upstream practices must also be reformed. If an upstream step has lower throughput than CODE, CODE will starve and sit idle waiting for work.
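Starvation can be quantified as the share of a step’s capacity left unused when upstream supplies less work than the step can handle. The rates below are hypothetical, and "refinement" is an illustrative name for the upstream step.

```python
def idle_fraction(upstream_supply_per_day: float, capacity_per_day: float) -> float:
    """Share of a step's capacity left unused when upstream supplies too little work."""
    used = min(upstream_supply_per_day, capacity_per_day)
    return 1 - used / capacity_per_day


# Hypothetical: AI-assisted CODE could finish 8 items/day, but refinement supplies only 2.
print(idle_fraction(2, 8))  # 0.75
print(idle_fraction(8, 8))  # 0.0
```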

Managing throughput means managing the entire system. Improving one step in isolation will not produce systemic improvement; the entire flow must be managed.

The Age of AI

AI is rapidly developing the ability to increase the throughput of human activity in software development. Organizations that adopt AI in the middle of their process will find increasing pressure on downstream steps, growing queues, and predictable declines in overall delivery. The system remediations called for during so-called agile adoption a decade earlier are now mandatory. AI is revealing that flow management is no longer optional: it is required to obtain the throughput and cycle-time benefits that AI has to offer.